Django 5 Travel Booking Site Generation Test with Qwen3.5-122B-A10B Local Inference

Testing whether a locally-running Qwen3.5-122B-A10B (Q5_K_M) can generate a full-stack Django 5 web application via MCP agent — no cloud API used

Overview

This article documents a test to determine whether a locally-running Qwen3.5-122B-A10B (Q5_K_M quantization) can generate a full-stack Django 5 travel booking site — with zero cloud API calls. Using an MCP (Model Context Protocol) coding agent for file operations, the model handled initial one-shot generation (with manual fixes), followed by two rounds of feature expansion adding Shop, Reviews, Search, and Accounts apps. Each stage required some manual corrections, but the bulk of code generation was completed entirely by local inference.

Cloud-scale models like Claude or GPT-4 can already do this kind of work routinely. The focus here is whether a quantized MoE model running on local hardware can achieve comparable results.

Local Inference Environment

| Item | Details |
|---|---|
| Model | Qwen3.5-122B-A10B (MoE, 10B active / 122B total parameters) |
| Quantization | Q5_K_M (GGUF, 3-shard split) |
| Inference Engine | ik_llama.cpp (OpenAI-compatible API server) |
| MCP Tools (custom) | ctree (code symbol analysis), pathfinder (path resolution) |
| MCP Tools (OSS) | serena (semantic code operations), filesystem (file read/write), ripgrep (search) |
| Context Usage | ~77K prompt tokens |

The key point: all inference ran on local hardware. No external API calls, no token billing. The agent combined multiple MCP servers — serena for symbol operations, filesystem for file I/O, ripgrep for search, ctree for code structure analysis, and pathfinder for path resolution — to incrementally build the Django application.
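To make "local inference" concrete at the request level, the sketch below builds a chat-completion request for an OpenAI-compatible server. The base URL, port, model identifier, temperature, and the helper name `build_chat_request` are all assumptions for illustration; the article does not state how the server was launched or addressed.

```python
import json
import urllib.request

# Assumed address of the local OpenAI-compatible server (hypothetical value).
LOCAL_BASE_URL = "http://127.0.0.1:8080/v1"


def build_chat_request(prompt, model="qwen3.5-122b-a10b", base_url=LOCAL_BASE_URL):
    """Build (but do not send) a chat-completion request for a local server."""
    payload = {
        "model": model,  # hypothetical model identifier
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.2,  # arbitrary example value
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

Once a server is listening, passing the returned object to `urllib.request.urlopen` sends it. Because the endpoint is on the loopback interface, no prompt or source code leaves the machine.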

Motivation

Why Test Locally Instead of Using Cloud APIs

Claude Sonnet and GPT-4o can already generate full-stack web applications reliably. But relying on cloud APIs means:

- Token costs accumulate over iterative development

- Proprietary code must be sent to external servers

- API availability and rate limits constrain workflow

If local inference can deliver cloud-comparable results, these constraints disappear. The question was: can a 122B MoE model quantized to Q5_K_M and running on ik_llama.cpp function as a practical coding agent?

One-Shot Generation Approach

A complete specification was provided upfront to generate all files at once. Generating everything in a single pass from one specification is meant to keep the following aligned:

- Consistency between domain models and views

- Template-form integration matching the specification

- Seed data aligned with model definitions

- Admin configuration consistent with model fields

Specification Design

Technology Stack

| Component | Choice | Rationale |
|---|---|---|
| Backend | Django 5.x | Python 3.13 compatible, full ORM, auto-generated admin |
| Frontend JS | Alpine.js v3 (CDN) | Works within Django templates, no build step |
| CSS | Tailwind CSS | Material Design 3 inspired utility-first approach |
| Package Manager | uv | Fast Python package manager |
| Database | SQLite | Development only, zero configuration |

Initial Domain Models

The initial specification defined three entities for the core booking flow:

TravelPackage 1 ──── N Tour

Tour 1 ──── N Reservation

TravelPackage (travel product):

- Title, slug, region, duration, image URL

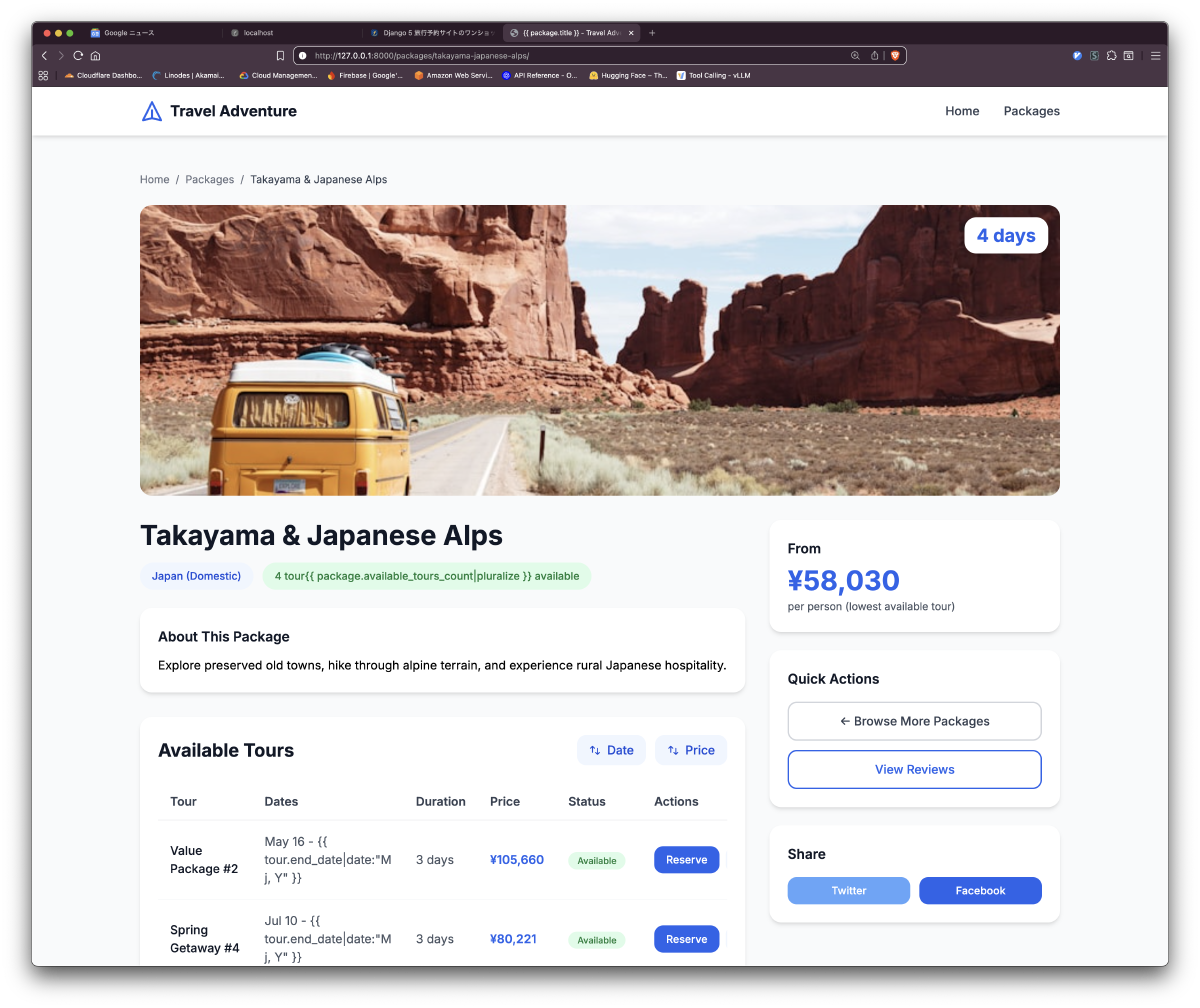

is_publishedflag for visibility controlmin_priceproperty for dynamic lowest tour price retrieval

Tour (scheduled departure):

- Specific departure/return dates, price, remaining seats

- Status management (available / soldout / cancelled)

- `is_reservable` property for reservation eligibility

Reservation (booking):

- Customer information (name, email, phone, guests, notes)

- FK relationship to Tour

- No payment processing (DB persistence only)

Business Rules

The specification explicitly defined the following rules:

- Only packages with `is_published=True` appear on the frontend

- Only tours with `status="available"` can be reserved

- Price display shows the lowest tour price within a package (`Min` aggregation)

- Reservation flow follows a 3-step pattern: form → confirmation preview → completion

- Database write occurs only on the confirmation POST (via session storage)
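The first three rules can be expressed as a framework-free sketch. This is not the generated Django code: plain dataclasses stand in for the ORM models, the field names `price` and `seats_left` are assumed, and the extra seat check inside `is_reservable` is an assumption that goes beyond the stated rule.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Tour:
    price: int
    seats_left: int
    status: str = "available"  # available / soldout / cancelled

    @property
    def is_reservable(self) -> bool:
        # Rule: only tours with status "available" can be reserved.
        # Requiring remaining seats as well is an added assumption.
        return self.status == "available" and self.seats_left > 0


@dataclass
class TravelPackage:
    title: str
    is_published: bool = False
    tours: List[Tour] = field(default_factory=list)

    @property
    def min_price(self) -> Optional[int]:
        # Rule: display the lowest tour price (an ORM version would use Min).
        prices = [tour.price for tour in self.tours]
        return min(prices) if prices else None


def visible_packages(packages):
    # Rule: only published packages appear on the frontend.
    return [package for package in packages if package.is_published]
```

Returning `None` from `min_price` for a package without tours lets a template decide what to show instead of a price.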

UI Wireframes

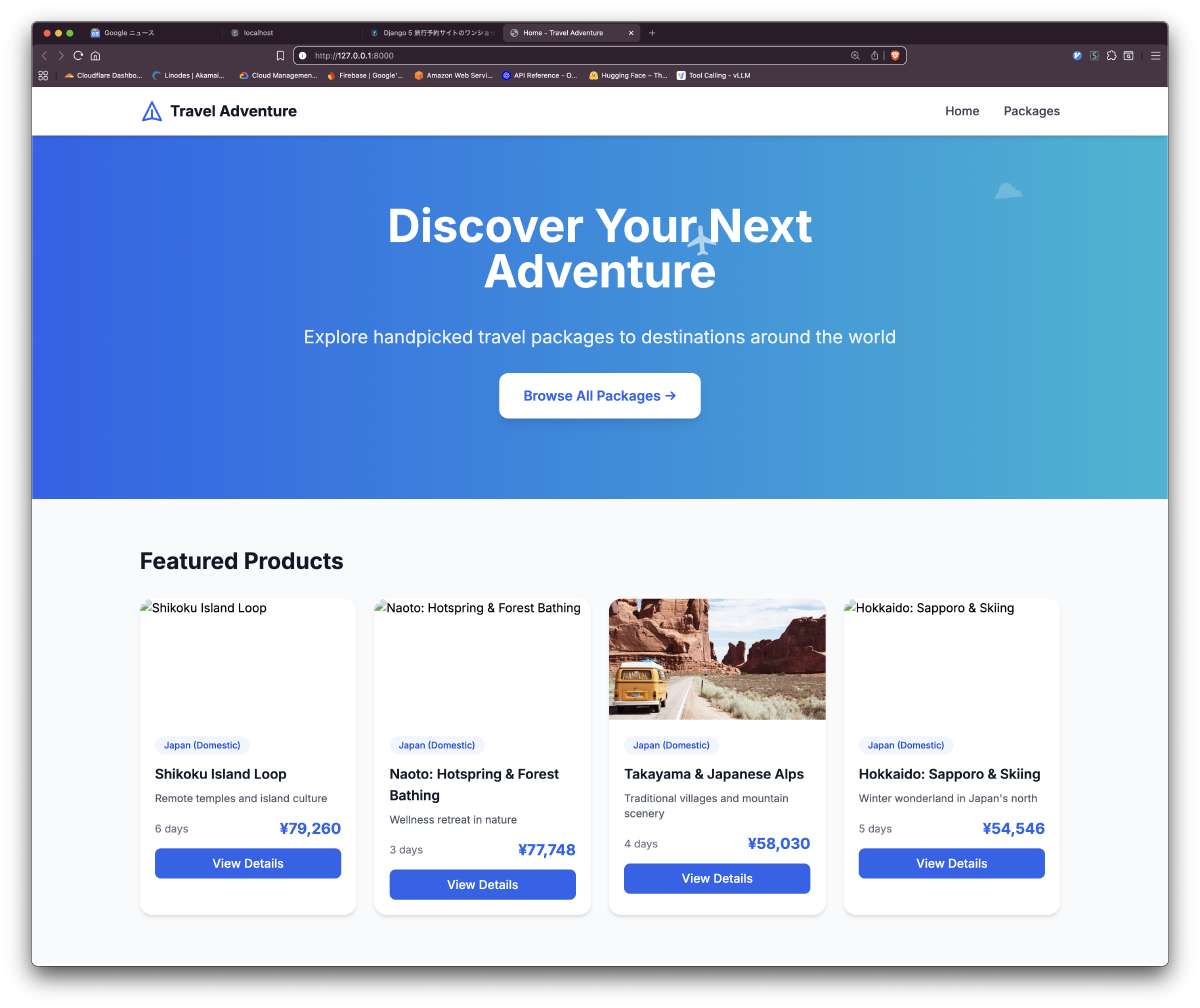

Layouts were defined as ASCII art, enabling the LLM to understand visual structure and apply appropriate Tailwind classes:

    ┌─────────────────────────────────────────────────┐
    │ HERO SECTION: bg-gradient-to-r from-blue-600    │
    │ [SVG airplane animation flying across]          │
    │ "Discover Your Next Adventure"                  │
    │ [ Browse All Packages → ]                       │
    ├─────────────────────────────────────────────────┤
    │ FEATURED PACKAGES (card grid)                   │
    │ ┌──────┐ ┌──────┐ ┌──────┐                      │
    │ │Card 1│ │Card 2│ │Card 3│                      │
    │ └──────┘ └──────┘ └──────┘                      │
    └─────────────────────────────────────────────────┘

Material Design 3 Specifications

| Role | Tailwind Class | Usage |

|---|---|---|

| Primary | bg-blue-600 | Buttons, links, active states |

| Surface | bg-white | Cards, modals, form backgrounds |

| Elevation Level 2 | shadow-md | Main cards, header |

| Border Radius | rounded-2xl | Cards; rounded-xl for buttons |

Hover effects: hover:shadow-lg hover:-translate-y-1 transition-all duration-200

Code Generated by Local LLM

Alpine.js Filtering

The agent read the Alpine.js specification and generated client-side filtering:

    x-data="{
        region: '',
        maxPrice: ''
    }"

    x-show="(region === '' || $el.dataset.region === region) &&
            (maxPrice === '' || parseInt($el.dataset.minPrice) <= parseInt(maxPrice))"

This server-API-free approach demonstrates that the local model correctly understood Alpine.js reactive patterns.
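The visibility rule in the `x-show` expression can be restated in Python, for example to check the expected result for a given card in a server-side test. This is a simplified mirror and not part of the generated code: the function name `card_visible` is hypothetical, and `int()` is stricter than JavaScript's `parseInt`, which accepts a numeric prefix such as `"12abc"`.

```python
def card_visible(card_region, card_min_price, region="", max_price=""):
    """Return True when a package card should stay visible."""
    # An empty filter value means "no restriction", as in the x-show expression.
    region_ok = region == "" or card_region == region
    if max_price == "":
        return region_ok
    try:
        price_ok = int(card_min_price) <= int(max_price)
    except (TypeError, ValueError):
        # In JavaScript, parseInt yields NaN here and the comparison is false.
        price_ok = False
    return region_ok and price_ok
```

The two arguments `card_region` and `card_min_price` correspond to the card's `data-region` and `data-min-price` attributes, which Alpine.js exposes as `$el.dataset.region` and `$el.dataset.minPrice`.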

Session-Based Reservation Flow

The 3-step reservation flow was generated as specified:

- Form input (`/reserve/<tour_id>/`): After validation, data is saved to session

- Confirmation preview (`/reserve/<tour_id>/confirm/`): Read from session, display summary

- Completion (`/reserve/success/`): Confirmation POST saves to DB, clears session

    # ReservationCreateView: POST saves to session
    request.session['reservation_data'] = form.cleaned_data
    return redirect('reservation_confirm', tour_id=tour.id)

    # ReservationConfirmView: POST saves to DB
    data = request.session.pop('reservation_data')
    Reservation.objects.create(tour=tour, **data)
    return redirect('reservation_success')
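The excerpt calls `session.pop('reservation_data')` without a default, so a repeated confirmation POST or an expired session would raise `KeyError`. The sketch below shows one way to guard against that. It is an illustration and not the generated code: a plain dict stands in for `request.session`, a list stands in for the database, and the function names are hypothetical.

```python
def submit_form(session, cleaned_data, tour_id):
    """Step 1: stash validated form data and move on to the preview."""
    session["reservation_data"] = dict(cleaned_data)
    return f"/reserve/{tour_id}/confirm/"


def confirm(session, tour_id, saved_reservations):
    """Step 2 POST: persist the reservation once, then clear the session."""
    data = session.pop("reservation_data", None)
    if data is None:
        # Session expired or the page was re-submitted: restart the flow.
        return f"/reserve/{tour_id}/"
    # Stands in for Reservation.objects.create(tour=tour, **data).
    saved_reservations.append({"tour_id": tour_id, **data})
    return "/reserve/success/"
```

Because the data is popped before the write, a second POST finds nothing in the session and is redirected to the form, so the same reservation is not stored twice.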

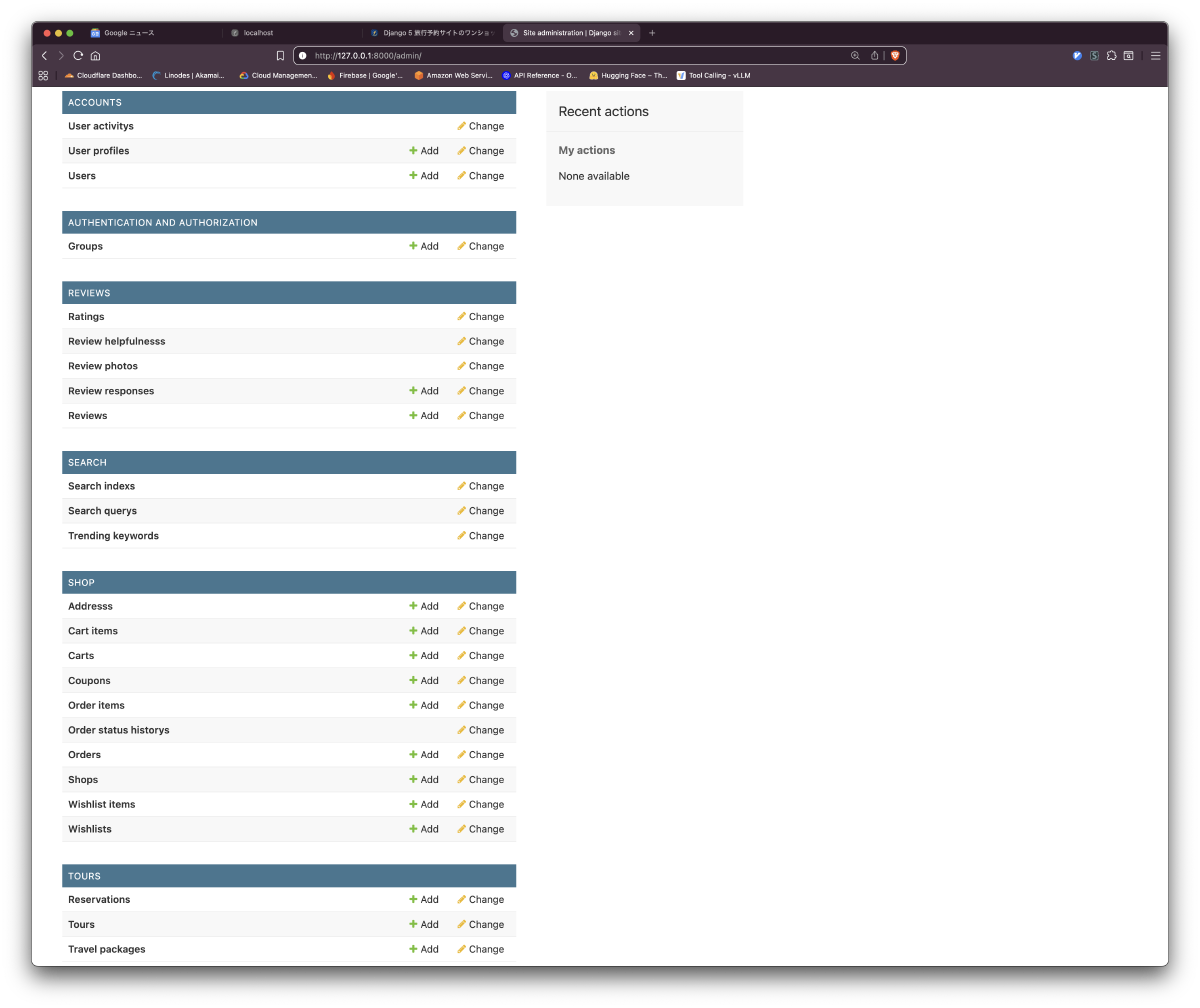

Django Admin Configuration

Admin configuration including inline editing was generated consistently with model definitions:

    from django.contrib import admin

    from .models import Tour, TravelPackage


    class TourInline(admin.TabularInline):
        model = Tour
        extra = 1  # show one blank tour row when editing a package


    @admin.register(TravelPackage)
    class TravelPackageAdmin(admin.ModelAdmin):
        list_display = ["title", "region", "duration_days", "is_published"]
        list_editable = ["is_published"]
        prepopulated_fields = {"slug": ("title",)}
        inlines = [TourInline]

Seed Data

The seed_demo management command auto-generates 5 packages (14 tours total) across different regions for filter testing.

Agent-Driven Incremental Expansion

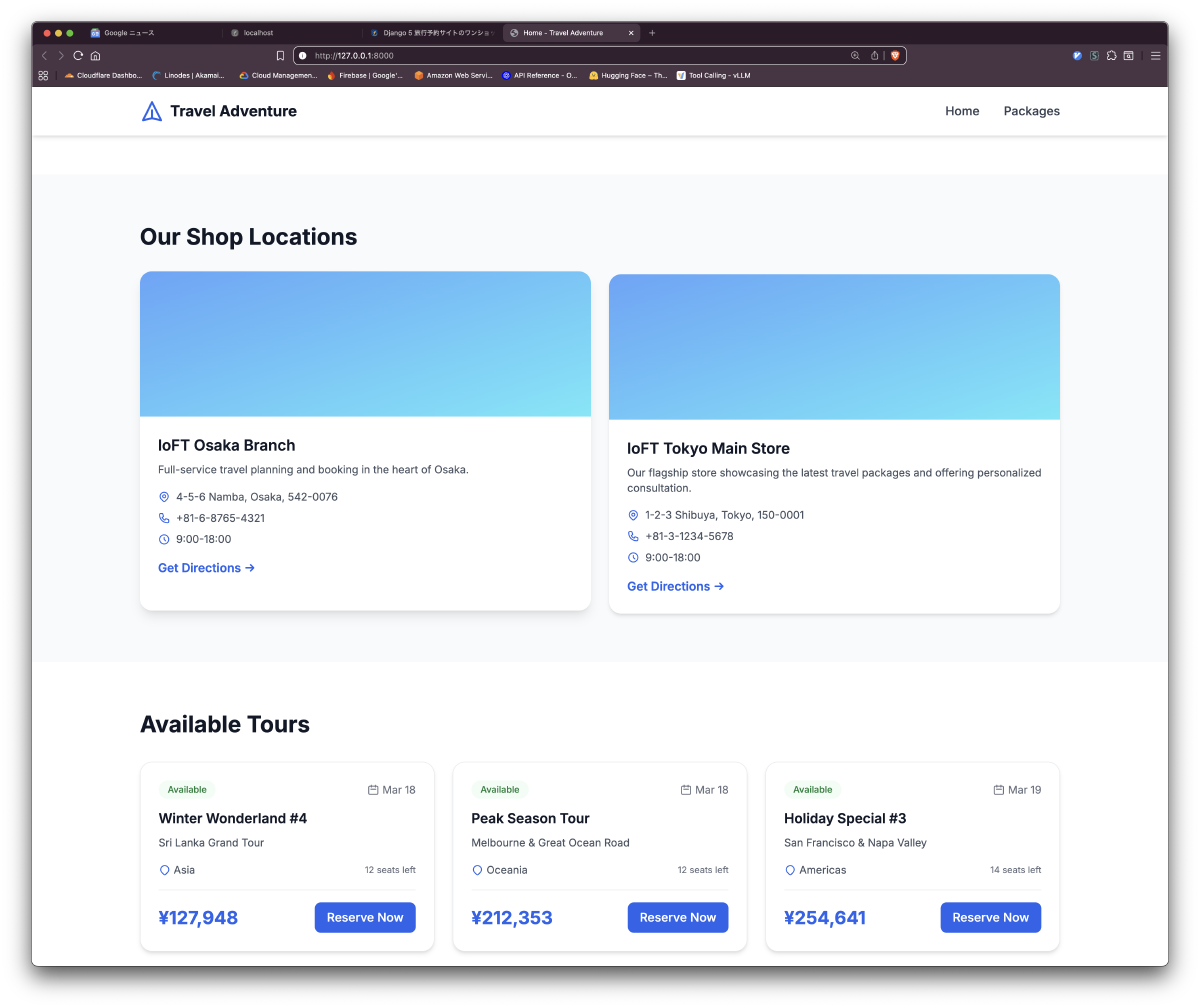

After the initial one-shot generation and manual fixes to stabilize the base, two additional feature expansion requests were made. The agent read existing code and added the following apps (with manual corrections required at each stage):

| Added App | Key Models | Agent’s Work |
|---|---|---|
| shop | Shop (store locations) | Added Shop model to models.py, registered admin, created templates |
| reviews | Review, Rating, ReviewPhoto | Full review system with approval workflow |
| search | SearchIndex, TrendingKeyword | Search index and trending keyword management |
| accounts | User, UserProfile, UserActivity | User authentication and profile management |

The agent read existing tours/models.py to verify FK relationships and maintained consistency with new models. However, template quote escaping, some missing imports, and cross-template inconsistencies required manual fixes at each stage. Rather than fully automatic generation, the workflow settled into roughly “80% agent-generated, 20% human-corrected.” Still, the fact that this “read existing code, understand context, then extend” behavior worked on a local MoE model is noteworthy.

Generation Results

Screenshots

Initial Generated File Structure

| File | Content |
|---|---|
| tours/models.py | 3 models + properties + validation |
| tours/admin.py | Admin configuration for 3 models + inline |
| tours/forms.py | ReservationForm (ModelForm) |
| tours/views.py | 6 views (Home, List, Detail, Form, Confirm, Success) |
| tours/urls.py | URL pattern definitions |
| tours/management/commands/seed_demo.py | Seed data |
| tours/templatetags/tour_filters.py | Custom filter (multiply) |
| tours/templates/tours/*.html | 7 templates |
| static/css/custom.css | SVG animations |
| config/settings.py | INSTALLED_APPS additions |
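The table lists a custom `multiply` template filter without showing it. The following is a minimal sketch of what such a filter might compute, for instance a price multiplied by a guest count. The body is an assumption and not the generated code. In a Django project the function would also be registered with `@register.filter` on a `template.Library()` instance; that step is left out so the block runs without Django installed.

```python
from decimal import Decimal, InvalidOperation


def multiply(value, arg):
    """Multiply two template values, returning "" when either is not numeric."""
    try:
        # Going through str() avoids binary float artifacts for price values.
        return Decimal(str(value)) * Decimal(str(arg))
    except (InvalidOperation, TypeError, ValueError):
        # Failing quietly keeps a bad value from breaking the whole page.
        return ""
```

In a template this would be used as `{{ tour.price|multiply:guests }}`, where `guests` is a hypothetical context variable.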

Verification

After manual corrections, the following functionality was confirmed:

- Server started after migration

- Seed data populated packages on the frontend

- Alpine.js filtering and sorting functioned correctly

- Reservation flow (input → confirm → complete) completed successfully

- Admin inline editing worked as specified

Key areas requiring manual fixes:

- Django template tag quote escaping on the package detail page (`{{ tour.end_date|date:"M j, Y" }}` rendered as raw strings)

- Some missing imports and type inconsistencies

- Cross-template consistency during feature expansion

SVG Animation

The hero section features an airplane flight animation (8-second loop) and floating clouds (15-second loop) generated as SVG with CSS keyframe animations.

Key Learnings

1. Local MoE Model Coding Capability

Qwen3.5-122B-A10B (Q5_K_M) generated coherent Django models, views, templates, and admin configuration. Even during incremental expansion using 77K tokens of context, consistency with existing code was maintained. Not quite cloud-model quality, but with clear specifications, the results were practically usable.

2. Specification Detail Determines Local LLM Accuracy

To get reliable output from a local LLM, specification quality is critical. Particularly important:

- Domain model field definitions (types, constraints, defaults)

- URL pattern to view mapping tables

- UI wireframes (ASCII art format is sufficient)

- Specific Tailwind class designations

Cloud-scale models often produce “good enough” output from vague instructions. Local models translate specification ambiguity directly into output inconsistency.

3. Multi-MCP Server Coordination

The combination of custom tools (ctree for code symbol analysis, pathfinder for path resolution) and OSS tools (serena for semantic code operations, filesystem for file I/O, ripgrep for search) worked reliably with the local LLM. The agent used serena to search symbols, filesystem to read/write files, and ctree to understand code structure — autonomously coordinating across multiple MCP servers.

4. Template Escaping Issues

Django template tag quote handling was an area where the local LLM struggled. Filter arguments with quotes like {{ value|date:"M j, Y" }} were sometimes not correctly escaped during generation. This can occur with cloud models too, but was somewhat more frequent with the local model.
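A small check can flag this class of problem before the templates are rendered. The article does not show the exact broken output, so the sketch below assumes the failure takes the form of backslash-escaped or HTML-entity quotes inside `{{ ... }}` tags. The pattern and the function name `find_suspect_tags` are illustrative.

```python
import re

# Matches a Django variable tag ({{ ... }}) containing a backslash-escaped
# quote or an HTML-entity quote, either of which breaks a filter argument.
SUSPECT_TAG = re.compile(r"""\{\{[^}]*?(\\["']|&quot;|&#39;)[^}]*\}\}""")


def find_suspect_tags(template_text):
    """Return (line_number, line) pairs for lines with a suspicious tag."""
    hits = []
    for number, line in enumerate(template_text.splitlines(), start=1):
        if SUSPECT_TAG.search(line):
            hits.append((number, line.strip()))
    return hits
```

Running this over each generated template after every agent round gives a list of line numbers to inspect by hand, which fits the 80/20 workflow described earlier.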

Summary

- Full web app from local inference only: Qwen3.5-122B-A10B (Q5_K_M) built a full-stack Django site with zero cloud API calls

- Incremental expansion capability: Extended from 3 initial models to 6 apps while maintaining existing code context

- MCP agent integration: Local ik_llama.cpp + multiple MCP servers (ctree / pathfinder / serena / filesystem / ripgrep) proved practically functional

- Specification quality drives local LLM accuracy: Clear specifications matter even more than with cloud models

- Manual fixes at each stage: Not fully automatic, but an 80/20 agent-to-human ratio proved sufficient to build a full-stack app