Profile

Koushuu Matsubara (ksh3) — founder of loFT LLC. A solo engineer handling cloud infrastructure, AI/LLM platforms, and full-stack development end-to-end.

About the Founder

Koushuu Matsubara

loFT LLC CEO / System Architect

My Story

I started working as a sole proprietor in 2014 while still employed full-time. Since incorporating in 2019, thanks to the generous support of my clients, I’ve been able to keep doing what I love — building systems. I’m genuinely grateful for every opportunity.

After about five years of deep work on a single long-term service, I felt a growing urge to take on new challenges. Large language models were maturing rapidly, and last year the prospect of running QwenNext-class models locally stood out in particular. So I left the team and decided to dedicate 2026 to research and development.

To that end, I built a serious local infrastructure: a hypercomputer that’s frankly overkill for a solo operator. I’ve been immersed in local LLM optimization every day since. Fortunately, I managed to purchase the workstation before memory prices spiked — good timing.

Currently I’m pursuing local LLM optimization research, implementing various MCP tools in Rust for multi-model and open-source model workflows, and steadily refining my local development infrastructure.

Much of my time is currently dedicated to R&D, so I may not always be available right away. But if you have a project in an area I find genuinely interesting, I’d love to hear about it. My strengths are service and system design and development across the board. Having worked across many languages and architectures, I can draw on a wide range of technologies to make the right choices for your project. Research-oriented work isn’t my core background, but given the opportunity, I believe I can bring a different perspective and deep technical experience to find practical solutions.

Character & Personality

I’m a lone-wolf type who loves contemplating system design and structure. I find joy in persistently working to understand something I don’t yet know. Questions like “Why is it designed this way?” and ideas like “I want to design this mechanism like so” pull me into the details, which is genuinely fun, and I feel every day that this work is my calling. I enjoy walking through the park, looking at trees and thinking about how nodes, branches, and leaves are structured and how they influence each other.

I took the MBTI twice at different times, and the result didn’t change. I mostly agree with the description, so I take it as a reasonable reference. People around me say I’m easygoing and laid-back. I’m originally from Kagoshima, and to this day my intonation on katakana words sometimes comes out off. It has become a running joke, and honestly, I think it works in my favor.

Things I Like

Linux in general, custom PCs & custom keyboards, distributed systems, complex programs, system design/architecture, reading design philosophies, design, programming, photography, cooking, park walks, contemplation.

Development Style & Current Tech Stack

Most of my current development revolves around APIs, tools, and cross-platform apps (Flutter/Tauri) written in Go, Rust, Python, and Dart. For UI, I typically use SolidJS, Alpine.js, React, and Tailwind. On the design side, I have used Sketch and Figma since the early days of Material Design. I haven’t had much design work lately, but I’m keen to try out Figma MCP tools, which look really useful.

I enjoy adopting new technologies — Rust and Go in particular feature heavily in my recent projects. At the same time, I continue to maintain and improve a long-standing client’s Django-based core system.

Infrastructure is almost always microservices. I design with container-based operations in mind. While GCP is my primary platform, I’ve been actively adopting Cloudflare as well in recent years. I’ve been deploying GCP as client infrastructure since around 2015.

Design Philosophy

My design philosophy is simple:

- Borrow from libraries rather than writing code from scratch

- Compose with OSS as building blocks

- Build structures that assume change

- Start with a short-term prototype to validate the concept, assemble the overall architecture with high-yield configurations, and ultimately prioritize long-term durability (ease of change) on a Linux foundation

Local Development Infrastructure

I aim to keep my development environment entirely local. For LLM R&D in particular, fast local iteration is critical. I’ve built a homelab with the following setup. Depending on the project, I can also attach LUKS-encrypted external storage or isolate the compute server from the network for analysis.

I’ve loved computers since childhood — I’ve been building PCs since the Athlon and Pentium 3 era. Since I sometimes need Windows builds for work and also wanted a ROCm environment, I built a desktop PC with pluggable SSDs for Windows/Linux dual-boot. I’ve been using vim keybindings for as long as I can remember, so a keyboard that fits perfectly — the Claw44 — is essential. Before I knew it, I’d bought two spares.

Compute Server (Ubuntu 24.04.3 LTS)

- CPU: AMD EPYC 9175F (16 cores, 4.2GHz–5GHz, L3 512MB)
- GPU: NVIDIA RTX PRO 6000 Max-Q (300W) 96GB x 2
- RAM: DDR5-6400 64GB x 12 (768GB)
- M/B: Supermicro H13SSL-NT
- Storage 1: SATA 6Gbps 3.84TB
- Storage 2: M.2 PCIe 4.0 3.84TB
- NIC: 10GbE x 2

Desktop PC (macOS latest)

- CPU: M1 Ultra
- RAM: 64GB
- Storage: 1TB
- NIC: 10GbE x 1

Storage Server (Ubuntu 24.04.3 LTS)

- CPU: Mac mini Late 2018 (Core i3)
- RAM: DDR4 SO-DIMM 8GB
- Storage: SSD 2TB, 4TB, HDD 24TB
- NIC: 1GbE x 1

The Mac mini Late 2018 is x86, so I replaced the OS with Ubuntu and run it 24/7. My apartment is on VDSL (slow), so I download TB-scale data from Hugging Face overnight.

Router + Switch (RouterOS)

- VDSL → Router
  - Wi-Fi 7 → mobile SSID, IoT SSID
  - DHCP server
  - CRS304 (Router + Switch) 192.168.0.2
    - GW: 10.10.10.1
    - Port1 → Desktop PC 10.10.10.2
    - Port2 → Storage Server 10.10.10.3
    - Port3 → Compute Server 10.10.10.4
    - Port4 → NETGEAR Wi-Fi 6 AP (DHCP server) 128.0.0.1
    - Port5 → WAN (1GbE, DHCP client) 192.168.0.2/24
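As an illustration, the wired address plan above can be sanity-checked with Python's standard `ipaddress` module. This is a minimal sketch, not the configuration actually in use. The /24 mask on the 10.10.10.x segment is an assumption (only the WAN side is listed with a mask), and `check_plan` is a hypothetical helper name.

```python
import ipaddress

# Assumption: the LAN behind the CRS304 is 10.10.10.0/24.
# The listing only states the gateway and the host addresses.
LAN = ipaddress.ip_network("10.10.10.0/24")
GATEWAY = ipaddress.ip_address("10.10.10.1")

# Wired hosts as listed for Port1 to Port3.
HOSTS = {
    "Desktop PC": "10.10.10.2",
    "Storage Server": "10.10.10.3",
    "Compute Server": "10.10.10.4",
}


def check_plan(hosts, lan, gateway):
    """Return a list of problems found in the address plan (empty if none)."""
    problems = []
    seen = {}
    for name, raw in hosts.items():
        addr = ipaddress.ip_address(raw)
        if addr not in lan:
            problems.append(f"{name}: {addr} is outside {lan}")
        if addr == gateway:
            problems.append(f"{name}: {addr} collides with the gateway")
        if addr in seen:
            problems.append(f"{name}: {addr} duplicates {seen[addr]}")
        seen[addr] = name
    return problems


if __name__ == "__main__":
    issues = check_plan(HOSTS, LAN, GATEWAY)
    print("address plan OK" if not issues else "\n".join(issues))
```

A check like this is cheap to rerun whenever a machine is added to the switch, and it flags out-of-subnet or duplicate addresses before they cause trouble on the 10GbE segment.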

2026 Theme

As of 2026, LLMs are no longer just a trend; they have reached an inflection point of industrial transformation. This year, I left a team I’d been part of for about five years to focus on R&D for local LLM infrastructure, data platforms, and AI environments.

Active use of AI has become the norm. But the more you use LLMs daily, the easier it is to just “use and move on.” Without systems to observe and analyze how they are used, know-how from AI adoption never accumulates. That amounts to letting insights slip away that could otherwise become assets.

Just as data collection and curation became a major theme in AI development only after the fact, “organizing usage data” will soon become critical in LLM adoption as well. Cloud LLMs, Claude in particular, are indispensable. But the more you delegate to agents, the more the decision-making process and know-how become a black box, and the harder it is to retain data and insights in-house. That is exactly why I believe a local LLM infrastructure is necessary: it offers a path to recapturing usage data in an observable form and connecting it to company-specific fine-tuning.

So right now, my top priority is building the infrastructure that serves as a “factory” for AI/LLM usage observation, analysis, application, data distillation, and synthetic data — and I’m fully focused on it.
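To make the idea of observable usage concrete, here is a minimal sketch of capturing every prompt/response pair in an append-only JSONL file. It is an illustration under stated assumptions, not a description of the actual platform: the record fields and the names `record_usage`, `load_usage`, and `observed` are hypothetical, and the model call is a stand-in function rather than a real client.

```python
import json
import os
import tempfile
import time
from pathlib import Path


def record_usage(log_path, model, prompt, response, tags=()):
    """Append one prompt/response pair to a JSONL log and return the record."""
    record = {
        "ts": time.time(),
        "model": model,
        "prompt": prompt,
        "response": response,
        "tags": list(tags),
    }
    with Path(log_path).open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record


def load_usage(log_path):
    """Read all records back, e.g. to review or curate them later."""
    with Path(log_path).open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


def observed(call_model, log_path, model):
    """Wrap any prompt -> text callable so that every call is logged."""
    def wrapper(prompt, tags=()):
        response = call_model(prompt)
        record_usage(log_path, model, prompt, response, tags)
        return response
    return wrapper


if __name__ == "__main__":
    # Hypothetical stand-in for a real model call.
    def fake_model(prompt):
        return prompt.upper()

    log_file = os.path.join(tempfile.mkdtemp(), "usage.jsonl")
    ask = observed(fake_model, log_file, model="local-model")
    ask("hello", tags=["demo"])
    print(len(load_usage(log_file)), "record(s) logged")
```

JSONL is used here only because it is append-friendly and easy to load into an analysis tool later. A real pipeline would likely add session IDs, token counts, and redaction before anything is reused for distillation or fine-tuning.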

Technical Areas

- Backend: Python (Django, FastAPI, Starlette), Go (Gin), Rust (Axum), Node.js (Express), C# (.NET Core)

- Frontend: TypeScript, React, Next.js, SolidJS, Alpine.js, Tailwind CSS, Bootstrap, UIkit, Material UI (major Material Design component libraries)

- Mobile / Desktop: Flutter (iOS/Android/Web), Tauri (desktop SPA + Rust/Axum backend)

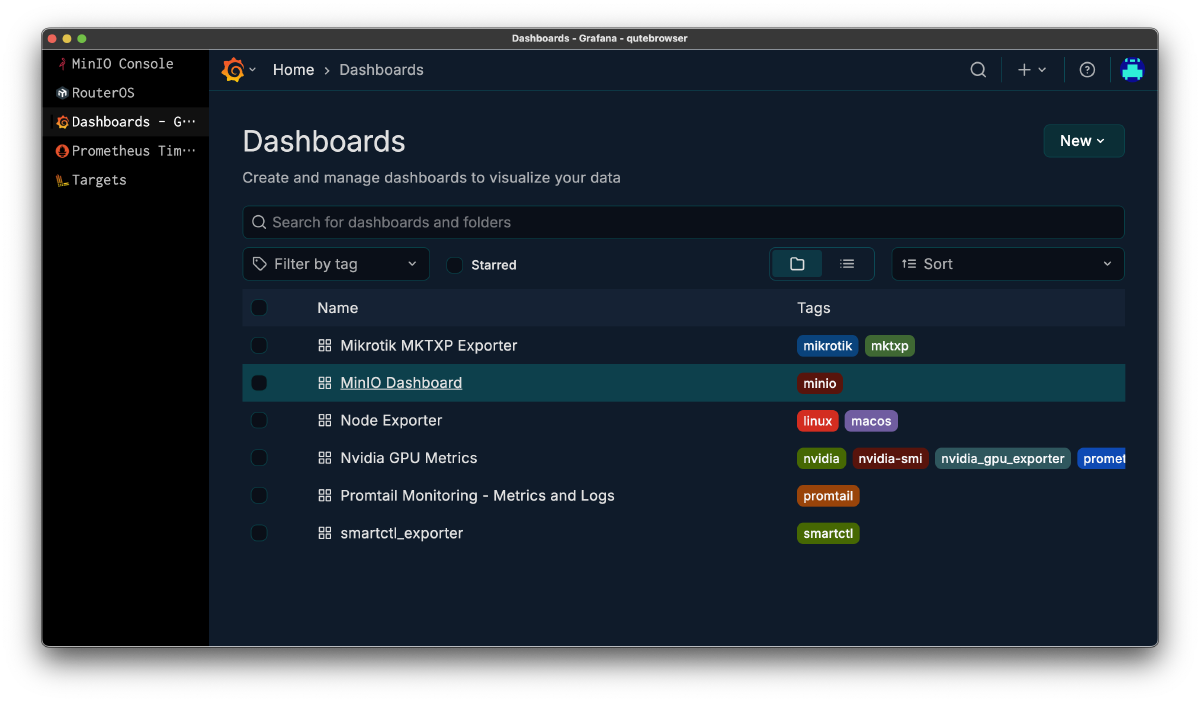

- Infrastructure / SRE: GCP, AWS, Azure, Cloudflare, Akamai/Linode, Linux (Ubuntu, Debian, CoreOS), Kubernetes (GKE), Docker/Podman, Observability (Prometheus, Grafana, Loki, Promtail, exporters)

- Data Platform: PostgreSQL, Apache Iceberg, Trino, dbt, Nessie, Object Storage (Cloudflare R2, S3, GCS)

- AI / LLM: llama.cpp, vLLM, ONNX, OpenAI-compatible API server operations, local LLM operations & quantization (Qwen/DeepSeek/Gemma/MiniMax/Llama/Kimi etc.), MCP tool development, GPU computing environment setup & operations, synthetic data/data distillation pipeline design

- Dev Environment / Tools: VSCode Server, Zed, Git (self-hosted Gitea)

- Design: Figma, Sketch

Company Overview

| Item | Details |
|---|---|
| Company | loFT LLC (loFT合同会社) |
| CEO | Koushuu Matsubara |
| Established | August 9, 2019 |
| Location | Musashino-shi, Tokyo, Japan |
| Capital | ¥9.5 million |
| Business | Cloud infrastructure design & operations, AI/LLM platform development, web application development, mobile app development, IT consulting |

Our Capabilities

Cloud Infrastructure

Multi-cloud design with GCP, AWS, Azure, Cloudflare, and Akamai. End-to-end support covering Kubernetes (GKE), container orchestration, serverless architecture, 10GbE networking, and Prometheus/Grafana monitoring.

AI & LLM Infrastructure

I build and operate on-premises GPU computing environments. My work includes inference optimization with llama.cpp, quantization testing, and benchmarking of large-scale models such as DeepSeek, Qwen, Kimi, GLM, and Llama.

Leveraging open-source LLMs, I provide end-to-end technical support and development across the AI/LLM stack, from system and infrastructure design to application development.

System Development

Web applications, REST APIs, and microservices built with Python, Go, TypeScript, Rust, and Dart. Framework expertise includes Django, FastAPI, Vue.js, React, Flutter, and Tauri.

Design

Rapid prototyping and UI/UX design with Figma. Mobile-first interface design based on Material Design principles.

History

| Date | Event |
|---|---|
| 2014 | Launched loFT as a sole proprietorship while working full-time |
| August 2019 | loFT LLC established (Musashino-shi, Tokyo) |
| August 2020 | Selected as IT Introduction Support Provider |
| November 2020 | Subsidiary Lorchestra Inc. established |
| April 2021 | Selected as IT Introduction Support Provider (2021) |
| February 2024 | Merged Lorchestra Inc. into loFT LLC (absorption-type merger) |
| 2025 | On-premises GPU computing infrastructure built, LLM research initiated |
| 2026 | Website renewal, technical documentation published |

As a Business Owner

I’ve automated accounting with freee and handled company registrations myself. I once carried out the entire absorption-type merger of a subsidiary I had established. Visiting the Legal Affairs Bureau for guidance and working through the process step by step was a great experience, and genuinely enjoyable.

At one point I considered hiring engineers and scaling the company, but the weight of being responsible for employees’ livelihoods felt significant. In the end, I decided a one-person company suits me best.

Through years of closing books and managing finances, I’ve deepened my understanding of P&L and balance sheets. That experience now helps me make short- to medium-term investment and capital allocation decisions.